Nvidia has unveiled BlueField-4 STX, a new reference architecture designed to address a critical performance limitation in artificial intelligence: the speed at which AI agents can access and process data. The core problem isn’t the AI models themselves, but the inability of traditional storage systems to keep pace with the demands of modern inference. This bottleneck impacts AI’s ability to maintain coherent “working memory” during complex tasks, tool calls, and multi-step reasoning processes.

The Problem with Current Storage

Large language models (LLMs) rely on a key-value (KV) cache to store intermediate attention results, so they do not have to recompute them for every new token. As AI agents handle longer contexts and more complex tasks, this cache grows in proportion to the number of tokens held in context and can outgrow GPU memory. When part of the cache must be fetched from slow, traditional storage, inference slows and GPU utilization suffers. This is not a theoretical issue: inference performance is directly limited by how quickly the system can retrieve previously processed data.
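To get a feel for the sizes involved, the sketch below estimates KV cache memory for a standard transformer, where each token stores one key and one value vector per layer and per KV head. The model dimensions are illustrative assumptions, not figures from Nvidia or any particular model, and `kv_cache_bytes` is a hypothetical helper name.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Estimate KV cache size in bytes for a standard transformer.

    The leading factor of 2 covers keys and values. bytes_per_value=2
    assumes 16-bit storage (FP16/BF16).
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Assumed, illustrative configuration: 32 layers, 8 KV heads, head size 128.
per_token = kv_cache_bytes(32, 8, 128, seq_len=1)
print(f"per token:      {per_token / 2**10:.0f} KiB")

for tokens in (8_192, 131_072):
    size = kv_cache_bytes(32, 8, 128, seq_len=tokens)
    print(f"{tokens:>7} tokens: {size / 2**30:.0f} GiB")
```

Under these assumptions a single 131,072-token context needs about 16 GiB of cache, and that figure multiplies with every concurrent session, which is why the cache spills out of GPU memory so quickly.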

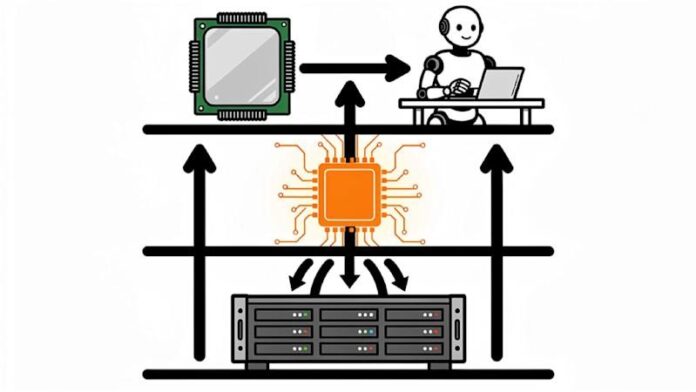

BlueField-4 STX: A Context Memory Layer

Nvidia’s solution is not a product the company sells directly, but a reference design for storage partners. BlueField-4 STX inserts a dedicated “context memory layer” between GPUs and conventional storage. The architecture combines Nvidia’s Vera CPU with the ConnectX-9 SuperNIC, runs on Spectrum-X Ethernet networking, and is programmable through Nvidia’s DOCA software platform. The goal is to keep the KV cache accessible at speeds that match GPU processing. The first implementation is the CMX context memory storage platform, which extends GPU memory with a high-performance layer for storing and retrieving KV cache data.
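The idea of a middle tier can be illustrated with a toy lookup path: check GPU memory first, then the context memory layer, and recompute only as a last resort. This is a conceptual sketch only. It does not use DOCA or any Nvidia API, and the class, tier names, and LRU eviction policy are all assumptions made for illustration.

```python
from collections import OrderedDict
from typing import Callable, Dict, Tuple


class TieredKVCache:
    """Toy two-tier cache: a small 'gpu' tier backed by a larger 'context' tier."""

    def __init__(self, gpu_capacity: int, recompute: Callable[[str], bytes]):
        self.gpu_capacity = gpu_capacity          # max blocks held in the fast tier
        self.gpu_tier: "OrderedDict[str, bytes]" = OrderedDict()
        self.context_tier: Dict[str, bytes] = {}  # stand-in for the context memory layer
        self.recompute = recompute                # expensive fallback (e.g. re-running prefill)

    def put(self, key: str, block: bytes) -> None:
        # Insert into the fast tier and mark as most recently used.
        self.gpu_tier[key] = block
        self.gpu_tier.move_to_end(key)
        # Demote least recently used blocks instead of discarding them.
        while len(self.gpu_tier) > self.gpu_capacity:
            old_key, old_block = self.gpu_tier.popitem(last=False)
            self.context_tier[old_key] = old_block

    def get(self, key: str) -> Tuple[bytes, str]:
        """Return the block and the name of the tier that served it."""
        if key in self.gpu_tier:
            self.gpu_tier.move_to_end(key)
            return self.gpu_tier[key], "gpu"
        if key in self.context_tier:
            block = self.context_tier.pop(key)
            self.put(key, block)                  # promote back to the fast tier
            return block, "context"
        block = self.recompute(key)               # miss in both tiers
        self.put(key, block)
        return block, "recompute"


cache = TieredKVCache(gpu_capacity=2, recompute=lambda k: k.encode())
for key in ("a", "b", "c", "a"):
    print(key, cache.get(key)[1])
```

In this toy version, a block evicted from the fast tier is served from the middle tier on its next access rather than being recomputed, which is the behavior a context memory layer is meant to provide at much larger scale.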

Partner Ecosystem & Availability

Nvidia is distributing this reference architecture to its storage partners to build AI-native infrastructure. The company has secured commitments from major players including Cloudian, Dell Technologies, HPE, IBM, NetApp, VAST Data, and WEKA. Cloud providers such as CoreWeave, Mistral AI, and Oracle Cloud Infrastructure have also committed to adopting STX for context memory storage.

Platforms based on STX are expected to become available from partners in the second half of 2026. The combination of enterprise storage incumbents and AI-native cloud providers signals Nvidia’s intent to position STX as a new standard for AI infrastructure.

Real-World Performance Gains

IBM is already demonstrating the impact of this approach. Its Storage Scale System 6000, certified on Nvidia DGX platforms, has shown significant improvements in data refresh cycles for structured analytics workloads. In a proof-of-concept with Nestlé, a data refresh across 186 countries and 44 tables dropped from 15 minutes to 3 minutes, yielding 83% cost savings and a 30x price-performance improvement. While this example focuses on structured data, it illustrates the broader point: the storage layer is often the primary constraint in enterprise AI deployments.

Why This Matters

The shift towards context-optimized storage is critical because general-purpose storage wasn’t designed for the latency requirements of agentic AI workloads. As AI becomes more integrated into enterprise operations, the storage layer will become a first-class infrastructure decision, not an afterthought to GPU procurement. Nvidia claims STX delivers 5x token throughput, 4x energy efficiency, and 2x data ingestion speed compared to traditional CPU-based storage, though specific baseline configurations for these measurements remain unspecified.

Nvidia’s BlueField-4 STX treats storage as a core part of AI infrastructure rather than a peripheral concern. By targeting the storage bottleneck, the company aims to enable faster, more efficient, and more scalable AI deployments across a wide range of industries.